There is a well-known gap in AI development that the industry calls the "last mile problem" -- though "last marathon" would be more accurate. Building a demo that shows an LLM doing something impressive takes an afternoon. Building a production system that does the same thing reliably, at scale, within budget, with proper monitoring and graceful degradation takes months of serious engineering work.

At Pepla, we have built production AI pipelines across multiple domains -- call centre analytics, document processing, code review automation, and customer-facing conversational systems. The patterns that emerge are remarkably consistent regardless of the use case. This article covers the engineering work that separates a compelling demo from a system you can bet your business on.

The Data Pipeline: Your Foundation

Every AI system is a data system first. Before you think about prompts or models, you need to think about how data enters the system, how it is transformed, and how results are stored and served.

Input Validation and Normalisation

Production data is messy in ways that demo data never is. Documents arrive in unexpected formats. Audio files have varying sample rates and encoding. Text contains unicode edge cases, injection attempts, and content that falls outside your expected domain. Your input layer needs to handle all of this gracefully.

A practical approach is to build a strict validation layer that rejects malformed inputs with clear error messages, a normalisation layer that converts valid inputs into a canonical format, and a classification layer that routes different input types to appropriate processing paths. Each of these should be independently testable and monitored.
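The three layers described above can be sketched as three small functions that are each testable on their own. This is a minimal illustration for plain-text inputs, not a complete input layer: the size limit, the route names, and the length-based routing rule are all assumptions made up for the example.

```python
import unicodedata
from dataclasses import dataclass

MAX_CHARS = 50_000  # assumed limit, chosen only for illustration


class ValidationError(ValueError):
    """Raised when an input is rejected, with a message the caller can act on."""


@dataclass(frozen=True)
class CanonicalInput:
    text: str
    route: str


def validate(raw: str) -> str:
    """Strict layer: reject malformed inputs with a clear reason."""
    if not isinstance(raw, str):
        raise ValidationError("input must be a string")
    if not raw.strip():
        raise ValidationError("input is empty")
    if len(raw) > MAX_CHARS:
        raise ValidationError(f"input exceeds {MAX_CHARS} characters")
    return raw


def normalise(text: str) -> str:
    """Canonical form: NFC unicode and single spaces between words."""
    text = unicodedata.normalize("NFC", text)
    return " ".join(text.split())


def classify(text: str) -> str:
    """Placeholder routing rule; a real router would use richer signals."""
    return "long_document" if len(text) > 2_000 else "short_text"


def ingest(raw: str) -> CanonicalInput:
    text = normalise(validate(raw))
    return CanonicalInput(text=text, route=classify(text))
```

Keeping the layers separate means a rejected input, a normalisation bug, and a routing mistake each show up in a different test and a different metric.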

Chunking and Context Management

Even with large context windows, you cannot feed unlimited data into a model. Documents need to be chunked intelligently -- preserving semantic coherence, maintaining section boundaries, and ensuring that related information stays together. For conversation analysis, you need to maintain context across multiple turns without exceeding token limits.

The chunking strategy is domain-specific and has a significant impact on output quality. A naive approach (split every N tokens) will cut sentences mid-thought and separate information that the model needs to see together. A well-designed chunking strategy understands the structure of your data and preserves the relationships that matter.
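As one illustration of a structure-aware alternative to the naive split, the sketch below packs whole paragraphs into chunks instead of cutting at a fixed offset. It assumes paragraphs are separated by blank lines and uses word count as a rough stand-in for tokens; a real pipeline would use the model's tokenizer and the structure of its own documents.

```python
def chunk_paragraphs(text: str, max_tokens: int = 200) -> list[str]:
    """Pack whole paragraphs into chunks, targeting max_tokens per chunk.

    Word count is used as a crude token proxy (an assumption for this sketch).
    """
    chunks: list[str] = []
    current: list[str] = []
    current_size = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        size = len(para.split())
        # Close the current chunk if this paragraph would push it over budget.
        if current and current_size + size > max_tokens:
            chunks.append("\n\n".join(current))
            current, current_size = [], 0
        # A paragraph larger than the budget becomes its own oversized chunk
        # rather than being cut mid-sentence.
        current.append(para)
        current_size += size
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Oversized paragraphs are deliberately left intact here; whether to split them further (for example at sentence boundaries) is a domain decision.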

Data Flow Architecture

For most production AI pipelines, you need both synchronous and asynchronous processing paths: a synchronous path for low-latency, user-facing interactions, and an asynchronous (typically queue-based) path for batch processing, heavy analysis, and non-time-sensitive tasks. The architecture should make it clear which path each type of work takes and handle the transitions between them.
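A minimal sketch of making the path explicit per type of work, using an in-process queue as a stand-in for a real message broker. The job kinds and the `process` stub are hypothetical.

```python
import queue
from dataclasses import dataclass
from typing import Optional

SYNC_KINDS = {"chat_reply"}  # assumed: work a user is actively waiting on


@dataclass(frozen=True)
class Job:
    kind: str
    payload: str


batch_queue: "queue.Queue[Job]" = queue.Queue()


def process(job: Job) -> str:
    # Stand-in for the real model call.
    return f"processed:{job.payload}"


def submit(job: Job) -> Optional[str]:
    """Run user-facing work inline; enqueue everything else for a worker."""
    if job.kind in SYNC_KINDS:
        return process(job)
    batch_queue.put(job)
    return None


def drain() -> list[str]:
    """What a background worker would do: process everything currently queued."""
    results = []
    while True:
        try:
            job = batch_queue.get_nowait()
        except queue.Empty:
            return results
        results.append(process(job))
```

The routing decision lives in one place (`submit`), so it is easy to see, and to test, which path a given kind of work takes.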

The data pipeline is the part of the system that determines whether your AI works reliably at scale. The model is the part that gets all the attention. Do not confuse visibility with importance.

Prompt Management

In a prototype, your prompts live in your code. In production, this is untenable. Prompts need to be versioned, tested, rolled out gradually, and rolled back quickly. They are a critical configuration layer that changes more frequently than code.

Version Control

Every prompt should be versioned, with a clear history of what changed and why. At Pepla, we store prompts as structured documents (YAML or JSON) in version control alongside the code, but treat them as configuration rather than source code. Each prompt has a unique identifier, a version number, metadata about its purpose and expected behaviour, and a set of evaluation criteria.
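To make the shape of such a record concrete, here is a sketch of a prompt stored as JSON and loaded into a typed object. The field names and the example prompt are assumptions for illustration, not a fixed schema; the same structure could equally be written as YAML.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    prompt_id: str
    version: int
    purpose: str
    template: str
    eval_criteria: tuple[str, ...]


# Hypothetical prompt file contents; every value here is made up for the example.
RAW = """
{
  "prompt_id": "call-summary",
  "version": 3,
  "purpose": "Summarise a support call in three sentences.",
  "template": "Summarise the following call transcript:\\n{transcript}",
  "eval_criteria": ["max_3_sentences", "mentions_resolution"]
}
"""


def load_prompt(raw: str) -> PromptVersion:
    """Parse a prompt record; a missing field raises KeyError at load time."""
    data = json.loads(raw)
    return PromptVersion(
        prompt_id=data["prompt_id"],
        version=int(data["version"]),
        purpose=data["purpose"],
        template=data["template"],
        eval_criteria=tuple(data["eval_criteria"]),
    )
```

Failing at load time on a missing field means a malformed prompt file is caught in review or CI rather than at request time.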

Prompt Templates

Production prompts are rarely static strings. They are templates with dynamic sections: the system prompt defines behaviour and constraints, context sections are populated from your data pipeline, and the user input is injected at the appropriate point. Structuring prompts as composable templates with clear interfaces between sections makes them maintainable and testable.
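One way to picture composable sections is with the standard library's `string.Template`. The section wording and the `---` separator between context chunks are assumptions for this sketch.

```python
from string import Template

# Each section is its own template with a small, explicit set of inputs.
SYSTEM = Template("You are a $role. Answer only from the provided context.")
CONTEXT = Template("Context:\n$context")
USER = Template("Question:\n$question")


def build_prompt(role: str, context_chunks: list[str], question: str) -> str:
    """Assemble the final prompt from independently testable sections."""
    sections = [
        SYSTEM.substitute(role=role),
        CONTEXT.substitute(context="\n---\n".join(context_chunks)),
        USER.substitute(question=question),
    ]
    return "\n\n".join(sections)
```

`substitute` raises `KeyError` when a placeholder is left unfilled, which turns a silently broken prompt into a loud failure.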

A/B Testing Prompts

When you update a prompt, you want to know whether the new version performs better than the old one before rolling it out completely. This requires infrastructure to route a percentage of traffic to the new prompt version, collect results from both versions, and compare them against your evaluation criteria. This is conceptually identical to A/B testing in web development, but the metrics are different -- you are comparing output quality rather than click-through rates.

Evaluation Frameworks

This is where most organisations underinvest, and it is arguably the most important component of a production AI system. If you cannot measure output quality systematically, you cannot improve it, and you cannot detect when it degrades.

Building Evaluation Datasets

An evaluation dataset is a collection of inputs paired with expected outputs (or quality criteria). Building a good evaluation dataset requires domain expertise and is time-consuming. It is also non-negotiable for production deployment.

Start with 100 to 200 representative examples, treating 100 as the minimum, that cover the range of inputs your system will encounter. Include edge cases, adversarial inputs, and examples from underrepresented categories. For each example, define what a good output looks like -- this can be an exact expected answer, a set of criteria the output must satisfy, or a reference output for comparison.

Automated Evaluation

Some quality dimensions can be evaluated programmatically. Does the output conform to the expected JSON schema? Does it contain required fields? Are extracted values numerically correct? Is the response within acceptable length bounds? Build automated checks for everything that can be objectively evaluated.
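The objective checks listed above can be sketched as a single function that returns a list of failures. The required fields and the length bound are hypothetical; substitute the schema your own outputs must satisfy.

```python
import json

# Assumed output contract, for illustration only.
REQUIRED_FIELDS = {"summary": str, "sentiment": str}
MAX_SUMMARY_CHARS = 500


def check_output(raw: str) -> list[str]:
    """Return a list of failure messages; an empty list means every check passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]

    failures = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            failures.append(f"missing field: {name}")
        elif not isinstance(data[name], expected_type):
            failures.append(f"wrong type for field: {name}")

    summary = data.get("summary")
    if isinstance(summary, str) and len(summary) > MAX_SUMMARY_CHARS:
        failures.append("summary exceeds length bound")
    return failures
```

Returning every failure, rather than stopping at the first, makes the results more useful when aggregated across an evaluation run.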

For subjective quality dimensions (Is the summary accurate? Is the tone appropriate? Is the analysis insightful?), you have two options: human evaluation, which is accurate but expensive and slow, or LLM-as-judge, where you use a model to evaluate the output of another model. The latter is increasingly reliable for well-defined criteria and is practical for continuous evaluation.

Evaluation in CI/CD

Your evaluation suite should run as part of your deployment pipeline. Before a new prompt version, model update, or code change reaches production, it should pass evaluation against your benchmark dataset. This is the AI equivalent of a test suite, and it should be treated with the same rigour.
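As a sketch of what such a gate might look like, the functions below compute a pass rate over a benchmark dataset and turn it into a process exit code that a CI job can act on. The 0.95 threshold and the callback signatures are assumptions; `generate` stands in for the real pipeline call.

```python
from typing import Callable, Sequence

Example = tuple[str, str]  # (input, expected output)


def pass_rate(
    dataset: Sequence[Example],
    generate: Callable[[str], str],
    is_acceptable: Callable[[str, str], bool],
) -> float:
    """Fraction of examples whose generated output is judged acceptable."""
    if not dataset:
        raise ValueError("evaluation dataset is empty")
    passed = sum(
        1 for text, expected in dataset if is_acceptable(generate(text), expected)
    )
    return passed / len(dataset)


def exit_code(rate: float, threshold: float = 0.95) -> int:
    """0 lets the deployment continue; 1 fails the pipeline step."""
    return 0 if rate >= threshold else 1
```

In a CI script the result would typically be passed to `sys.exit`, so a regression blocks the deployment in the same way a failing unit test would.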

You would not deploy code without running tests. Do not deploy prompt changes without running evaluations.

Monitoring in Production

AI systems degrade in ways that traditional software does not. A conventional application usually either works or throws an error. An AI system can produce subtly wrong outputs that look correct -- lower-quality summaries, slightly inaccurate extractions, gradually drifting tone -- without any error being raised. Monitoring must account for this.

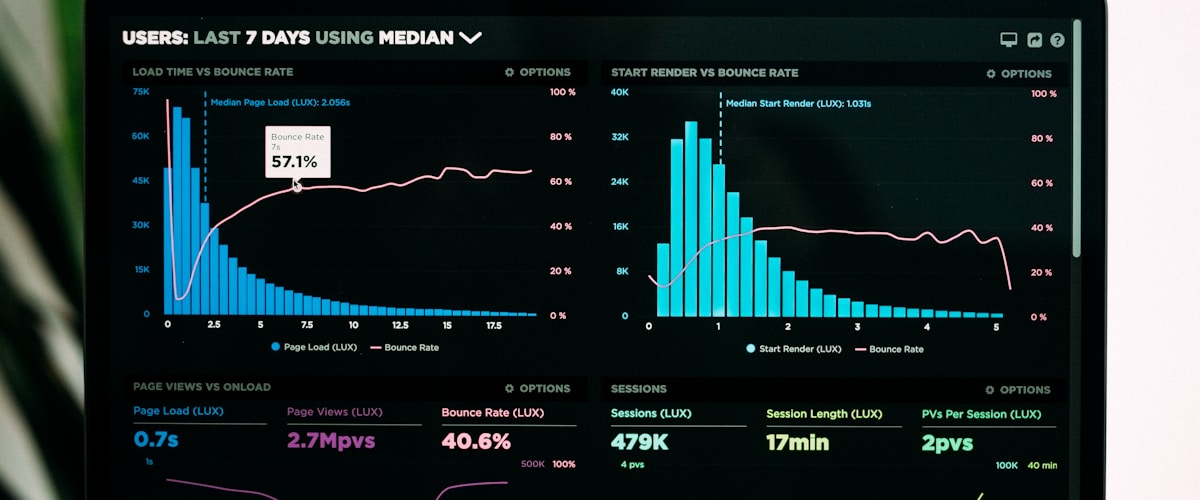

Operational Metrics

Standard infrastructure monitoring applies: latency (per request and end-to-end pipeline), throughput, error rates, queue depths, and resource utilisation. These tell you whether the system is running. They do not tell you whether it is producing good results.

Quality Metrics

Quality monitoring requires running evaluation checks on a sample of production outputs. This can be automated (schema conformance, required fields, length bounds) or semi-automated (LLM-as-judge evaluation on a random sample, flagged for human review when scores drop below a threshold). Track quality metrics over time and alert on degradation.
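A minimal sketch of the sampling-and-alerting loop, assuming quality scores between 0 and 1 from whatever evaluator you use. The sample rate, window size, and alert threshold are illustrative defaults, not recommendations.

```python
import random
from collections import deque
from typing import Optional


class QualityMonitor:
    """Scores a random sample of outputs and tracks a rolling mean."""

    def __init__(
        self,
        sample_rate: float = 0.05,
        window: int = 200,
        alert_below: float = 0.8,
        rng: Optional[random.Random] = None,
    ) -> None:
        self.sample_rate = sample_rate
        self.alert_below = alert_below
        self._scores: deque = deque(maxlen=window)
        self._rng = rng or random.Random()

    def should_sample(self) -> bool:
        """Decide whether this production output gets evaluated."""
        return self._rng.random() < self.sample_rate

    def rolling_mean(self) -> float:
        return sum(self._scores) / len(self._scores)

    def record(self, score: float) -> bool:
        """Add a score; return True when the rolling mean is below the threshold."""
        self._scores.append(score)
        return self.rolling_mean() < self.alert_below
```

Alerting on a rolling mean rather than on individual scores avoids paging someone for a single bad output while still catching sustained degradation.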

Drift Detection

If the distribution of your input data changes -- new types of documents, different customer demographics, seasonal variations in call topics -- your system's performance may change even if nothing in the system itself has changed. Monitor input characteristics (document length distribution, language mix, topic distribution) and correlate changes with quality metrics.
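The text above does not prescribe a drift metric. One common choice for categorical input characteristics such as language mix is the population stability index (PSI), sketched here. The smoothing constant is an assumption to avoid taking the logarithm of zero, and any alert threshold (a frequently quoted rule of thumb is around 0.25) should be calibrated on your own data.

```python
import math
from collections import Counter
from typing import Iterable, Sequence


def _shares(labels: Sequence[str], categories: Iterable[str], eps: float) -> dict:
    counts = Counter(labels)
    total = len(labels)
    # Floor each share at eps so unseen categories do not produce log(0).
    return {c: max(counts[c] / total, eps) for c in categories}


def psi(baseline: Sequence[str], current: Sequence[str], eps: float = 1e-6) -> float:
    """Population stability index between two samples of categorical labels."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    categories = set(baseline) | set(current)
    expected = _shares(baseline, categories, eps)
    actual = _shares(current, categories, eps)
    return sum(
        (actual[c] - expected[c]) * math.log(actual[c] / expected[c])
        for c in categories
    )
```

For example, comparing last month's language labels with this week's gives a single number to chart next to the quality metrics, which makes the correlation described above easier to spot.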

Cost Monitoring

LLM API calls cost money. In a production pipeline processing thousands or millions of items, costs can escalate quickly and unpredictably. Monitor per-request costs, daily aggregate costs, and cost-per-item for your core workflows. Set alerts for cost anomalies -- a sudden spike usually indicates a bug (infinite retry loops, malformed inputs generating excessive tokens) rather than legitimate traffic growth.
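The arithmetic behind per-request cost and a simple spike alert can be sketched as follows. The prices in the usage example are placeholders, not any provider's real rates, and the "three times the recent average" rule is an assumed starting point.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Pricing:
    """Price per million tokens, in your billing currency (placeholder values)."""
    input_per_million: float
    output_per_million: float


def request_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost of one request given its token counts."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


def is_cost_anomaly(
    today_total: float, recent_daily_totals: Sequence[float], factor: float = 3.0
) -> bool:
    """Flag a day whose spend exceeds `factor` times the recent daily average."""
    if not recent_daily_totals:
        return False  # no baseline yet, so nothing to compare against
    baseline = sum(recent_daily_totals) / len(recent_daily_totals)
    return today_total > factor * baseline
```

With hypothetical prices of 2.00 per million input tokens and 8.00 per million output tokens, a request with 1,000 input and 500 output tokens costs (1,000 × 2.00 + 500 × 8.00) / 1,000,000 = 0.006.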

Cost Management

Cost management in AI pipelines is a discipline unto itself. Several strategies are effective in practice.

Model Tiering

Not every task requires a frontier model. Route simple, high-volume tasks to smaller, cheaper models. Reserve expensive models for complex tasks where the quality difference justifies the cost. A well-designed routing layer can reduce costs by 60-80% compared to running everything through a single high-end model.
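A routing layer can start as a few explicit rules. In this sketch the model names, the set of "simple" tasks, and the token threshold are all hypothetical.

```python
# Hypothetical tier names; replace with the models you actually use.
MODEL_TIERS = {"small": "small-model", "large": "frontier-model"}

# Assumed set of tasks known (from evaluation) to work well on the small tier.
SIMPLE_TASKS = {"classify", "extract_field"}


def choose_model(task: str, input_tokens: int, long_input_threshold: int = 4_000) -> str:
    """Send simple, short tasks to the cheap tier and everything else to the large one."""
    if task in SIMPLE_TASKS and input_tokens <= long_input_threshold:
        return MODEL_TIERS["small"]
    return MODEL_TIERS["large"]
```

Which tasks belong in the cheap tier is a question for the evaluation suite: a task moves down a tier only once the smaller model passes the same checks.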

Caching

If the same or very similar inputs appear frequently, cache the results. Exact-match caching is straightforward. Semantic caching (recognising that a new input is sufficiently similar to a cached input that the cached result is valid) is more complex but can yield significant savings for systems with repetitive query patterns.
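Exact-match caching can be sketched with a dictionary keyed by a hash of the prompt version and the input. Including the prompt version in the key and collapsing whitespace before hashing are both assumptions of this example; an in-memory dictionary stands in for a shared cache.

```python
import hashlib
from typing import Callable


class ExactMatchCache:
    """In-memory exact-match cache for model results."""

    def __init__(self) -> None:
        self._store: dict = {}

    @staticmethod
    def key(prompt_version: str, text: str) -> str:
        # Collapse whitespace so trivially different inputs share an entry.
        canonical = " ".join(text.split())
        payload = f"{prompt_version}\x00{canonical}".encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def get_or_compute(
        self, prompt_version: str, text: str, compute: Callable[[str], str]
    ) -> str:
        k = self.key(prompt_version, text)
        if k not in self._store:
            self._store[k] = compute(text)  # only pay for the model call on a miss
        return self._store[k]
```

Keying on the prompt version means a prompt change naturally invalidates old entries instead of serving results produced by the previous prompt.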

Prompt Optimisation

Shorter prompts cost less. Review your prompts for unnecessary verbosity. A concise system prompt that achieves the same output quality as a verbose one can reduce per-request costs by 20-40%. This is another reason prompt management matters -- you need to measure whether a shorter prompt degrades quality before deploying it.

Batch Processing

Where latency is not critical, batch API calls offer significant cost savings. Several major providers offer batch endpoints at discounts of around 50% compared to real-time API calls; check current pricing for the provider you use. Structure your pipeline to accumulate non-urgent work and process it in batches.
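Accumulating non-urgent work can be as simple as the sketch below, which hands back a full batch once a size limit is reached. The limit of 100 is arbitrary, and submitting the batch to a provider's batch endpoint is left out because that call is provider-specific.

```python
from typing import Generic, Optional, TypeVar

T = TypeVar("T")


class BatchAccumulator(Generic[T]):
    """Collects items and releases them in batches of up to max_items."""

    def __init__(self, max_items: int = 100) -> None:
        if max_items < 1:
            raise ValueError("max_items must be at least 1")
        self.max_items = max_items
        self._items: list = []

    def add(self, item: T) -> Optional[list]:
        """Add an item; return a full batch when the limit is reached, else None."""
        self._items.append(item)
        if len(self._items) >= self.max_items:
            return self.flush()
        return None

    def flush(self) -> list:
        """Return whatever has accumulated (possibly empty) and reset."""
        batch, self._items = self._items, []
        return batch
```

A production version would normally also flush on a timer, so that a quiet period does not leave a partial batch waiting indefinitely.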

Fallback Strategies

AI systems fail. Models return errors, rate limits are hit, quality degrades on unusual inputs. Your system needs to handle these failures gracefully.

Model Fallbacks

If your primary model is unavailable, can you fall back to an alternative? This requires that your prompt architecture is portable across models (or that you maintain model-specific prompt variants). Test your fallback regularly -- a fallback path that has not been exercised in months is unlikely to work when you need it.
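A fallback chain can be sketched as an ordered list of providers tried in turn. `ModelUnavailable` and the provider callables are hypothetical stand-ins for whatever errors and clients your model SDKs expose.

```python
from typing import Callable, Sequence


class ModelUnavailable(Exception):
    """Hypothetical error type for outages, timeouts, and rate limits."""


Provider = tuple[str, Callable[[str], str]]  # (name, function that calls the model)


def call_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider name, output) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ModelUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise ModelUnavailable("all providers failed: " + "; ".join(errors))
```

Returning the provider name alongside the output lets monitoring track how often the fallback path is actually taken, which is also a cheap way to confirm it still works.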

Graceful Degradation

Define what your system does when AI processing fails entirely. Can you return a partial result? Can you queue the item for later processing? Can you fall back to a rule-based system for critical functionality? The answer depends on your domain, but the question must be answered before you reach production.

Human-in-the-Loop Escalation

For high-stakes decisions, build an escalation path to human review. Define clear criteria for when automated processing is insufficient and route those cases to a human queue. This is not a failure of the AI system -- it is a design feature that keeps the overall system reliable.
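Escalation criteria are easier to audit when they are written down as code. The sketch below assumes two signals, a high-stakes flag set by business rules and a confidence score from your evaluator; both signals and the 0.85 threshold are illustrative assumptions.

```python
def route_decision(confidence: float, high_stakes: bool, min_confidence: float = 0.85) -> str:
    """Return "human_review" or "automated" for a single processed item."""
    if high_stakes:
        return "human_review"  # always reviewed, regardless of confidence
    if confidence < min_confidence:
        return "human_review"  # automated result not trusted enough
    return "automated"
```

Because the criteria are explicit, the share of items routed to the human queue becomes a metric you can track and tune over time.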

A/B Testing LLM Outputs

Continuously improving an AI pipeline requires the ability to test changes safely. A/B testing infrastructure for AI pipelines has several specific requirements.

- Traffic splitting that routes a controlled percentage of requests to a variant while the majority continues through the current version.

- Consistent assignment so that a given input always hits the same variant for the duration of the test, enabling clean comparisons.

- Quality metrics collection for both variants, using your evaluation framework to score outputs.

- Statistical significance testing to determine when you have enough data to declare a winner, accounting for the typically high variance of LLM outputs.

- Quick rollback if a variant performs significantly worse than the baseline.
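Two of the requirements above, consistent assignment and significance testing, can be sketched with the standard library alone. Hashing a stable key gives the same variant for the same input every time, and a two-proportion z-test is one simple option for comparing pass rates; it assumes each output is scored pass or fail, which may be too coarse for some quality metrics. The experiment name and key format are hypothetical.

```python
import hashlib
import math


def assign_variant(key: str, treatment_share: float, experiment: str = "prompt-v2") -> str:
    """Deterministically map a stable key (e.g. a document ID) to a variant."""
    digest = hashlib.sha256(f"{experiment}:{key}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return "variant" if bucket < treatment_share else "control"


def two_proportion_p_value(pass_a: int, n_a: int, pass_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two pass rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one observation")
    pooled = (pass_a + pass_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # identical all-pass or all-fail groups: no evidence of a difference
    z = (pass_b / n_b - pass_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))
```

Including the experiment name in the hash means a new experiment reshuffles assignments, so the same inputs are not always the ones exposed to variants.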

Putting It All Together

A production AI pipeline is not a model with an API wrapper. It is a software system with the same engineering requirements as any other production system -- plus additional requirements around evaluation, quality monitoring, and cost management that are specific to AI.

The organisations that successfully bridge the prototype-to-production gap are the ones that treat AI engineering as engineering, not as data science with a deployment step bolted on. They invest in testing infrastructure, monitoring, and operational tooling with the same seriousness they bring to their core application code.

This is exactly the gap Pepla bridges for clients -- taking AI prototypes and engineering them into production-grade systems with proper monitoring, fallback strategies, and cost management.

At Pepla, our production AI systems typically involve more engineering around the pipeline -- data handling, evaluation, monitoring, cost management, fallbacks -- than around the AI model itself. The model is the engine. Everything else is what makes it safe to drive.

Checklist for Production Readiness

- Input validation and normalisation handle all expected (and unexpected) data formats.

- Prompts are versioned, tested, and can be rolled back independently of code deployments.

- An evaluation dataset exists with at least 100 representative examples and clear quality criteria.

- Evaluation runs automatically before any prompt or model change reaches production.

- Operational monitoring covers latency, throughput, error rates, and costs.

- Quality monitoring samples production outputs and alerts on degradation.

- Cost management includes model tiering, caching where appropriate, and budget alerts.

- Fallback strategies are defined, implemented, and regularly tested.

- Human escalation paths exist for cases the AI cannot handle reliably.

- A/B testing infrastructure allows safe testing of changes before full rollout.