If you are an executive or business leader evaluating AI automation, you have probably seen two kinds of presentations. The first is the vendor pitch, full of transformative promises and cherry-picked case studies that make ROI look inevitable. The second is the engineering team's honest assessment, which is more cautious and harder to translate into a business case your board can evaluate. This article aims to bridge those two perspectives with a practical framework for evaluating AI automation investments.

The ROI Calculation Framework

Calculating return on investment for AI automation requires accounting for costs and benefits that do not appear in traditional software project estimates. Here is a framework that covers the relevant dimensions.

Development cost is typically less than half the total -- budget for infrastructure, operations, and change management too.

Cost Components

Total cost of an AI automation project typically falls into four categories:

- Development costs. Building the system: engineering time, design, architecture, integration with existing systems. This is the cost most people estimate. It is usually 30-40% of the total cost of ownership.

- Infrastructure and API costs. Cloud hosting, model API fees, data storage, and processing. Unlike traditional software where infrastructure costs are relatively predictable, AI systems have variable costs that scale with usage. A document processing system that handles 1,000 documents per day has fundamentally different running costs from one that handles 100,000.

- Ongoing operations. Monitoring, prompt maintenance, evaluation dataset updates, model migrations when providers release new versions, handling edge cases that emerge in production. This is the cost most people underestimate. Plan for ongoing operational effort equivalent to 15-25% of initial development cost per year.

- Change management. Training staff to work with the new system, adjusting workflows, managing the transition period where old and new processes run in parallel, and addressing organisational resistance. This cost is invisible in a technology budget but real in practice.
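The four cost categories above can be combined into a simple total-cost-of-ownership estimate. The sketch below is illustrative only: the `CostInputs` fields, the three-year horizon, and every rand figure are assumptions made for this example, not figures from a real project.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    development: float        # one-off build cost
    infra_per_year: float     # hosting, model API fees, storage (scales with usage)
    ops_rate: float           # annual operations as a fraction of development cost
    change_management: float  # one-off training and transition cost
    years: int                # evaluation horizon


def total_cost_of_ownership(c: CostInputs) -> float:
    """Sum one-off and recurring costs over the evaluation horizon."""
    recurring = (c.infra_per_year + c.ops_rate * c.development) * c.years
    return c.development + c.change_management + recurring


def development_share(c: CostInputs) -> float:
    """Fraction of total cost of ownership attributable to development."""
    return c.development / total_cost_of_ownership(c)


# Hypothetical inputs, chosen only to illustrate the calculation.
example = CostInputs(
    development=1_000_000,
    infra_per_year=300_000,
    ops_rate=0.20,  # inside the 15-25% range mentioned above
    change_management=250_000,
    years=3,
)

if __name__ == "__main__":
    print(f"TCO over {example.years} years: R{total_cost_of_ownership(example):,.0f}")
    print(f"Development share: {development_share(example):.0%}")
```

With these assumed inputs, development comes to a little over a third of the three-year total. Changing the horizon or the infrastructure figure moves that share noticeably, so it is worth running the numbers for your own case.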

Benefit Components

Benefits of AI automation are not limited to direct cost savings. A comprehensive assessment includes:

- Labour cost reduction. The most straightforward benefit: tasks that required human effort are now automated. Calculate this by measuring the current cost of the task (hours x fully loaded cost per hour) and estimating the percentage that automation will handle. Be conservative -- in our experience, most AI automation handles 60-80% of volume autonomously, with the remainder requiring human involvement.

- Quality improvement. Quantify the cost of errors in the current process. If manual data entry has a 3% error rate and each error costs R500 to correct, the annual error cost is annual volume x 3% x R500; at 100,000 entries a year, that is R1.5 million. AI automation typically reduces error rates significantly for structured tasks.

- Speed improvement. If processing time matters to revenue -- faster loan approvals, quicker customer responses, shorter time-to-market -- the value of speed can be substantial. Calculate this in terms of revenue impact, not just efficiency.

- Scale enablement. Can the business handle 10x the current volume without proportionally increasing headcount? If growth is constrained by processing capacity, automation removes that constraint. The value of this depends on your growth trajectory.

- Opportunity cost recovery. What could your skilled employees do if they were not performing the tasks being automated? If automating compliance checking frees up analysts to do higher-value advisory work, the benefit includes the value of that advisory work, not just the cost of the checking.

The most significant ROI from AI automation often comes not from eliminating costs, but from enabling activities that were previously impossible or impractical at the current scale.
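The two most easily quantified benefits above, labour cost reduction and quality improvement, reduce to short formulas. The following is a minimal sketch; the function names and the volumes, hours, and rates used in the example are assumptions for illustration.

```python
def annual_labour_saving(
    items_per_year: int,
    hours_per_item: float,
    loaded_cost_per_hour: float,
    automation_rate: float,
) -> float:
    """Current task cost (hours x fully loaded rate) times the share automated."""
    current_cost = items_per_year * hours_per_item * loaded_cost_per_hour
    return current_cost * automation_rate


def annual_error_cost(
    items_per_year: int, error_rate: float, cost_per_error: float
) -> float:
    """Expected yearly cost of correcting errors in a process."""
    return items_per_year * error_rate * cost_per_error


if __name__ == "__main__":
    # Hypothetical process: 100,000 items a year, 6 minutes each, R300 per hour.
    saving = annual_labour_saving(100_000, 0.1, 300.0, automation_rate=0.60)
    errors = annual_error_cost(100_000, error_rate=0.03, cost_per_error=500.0)
    print(f"Labour saving at 60% automation: R{saving:,.0f}")
    print(f"Current annual error cost: R{errors:,.0f}")
```

Speed, scale, and opportunity-cost benefits depend on revenue assumptions specific to each business, so they are deliberately left out of this sketch.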

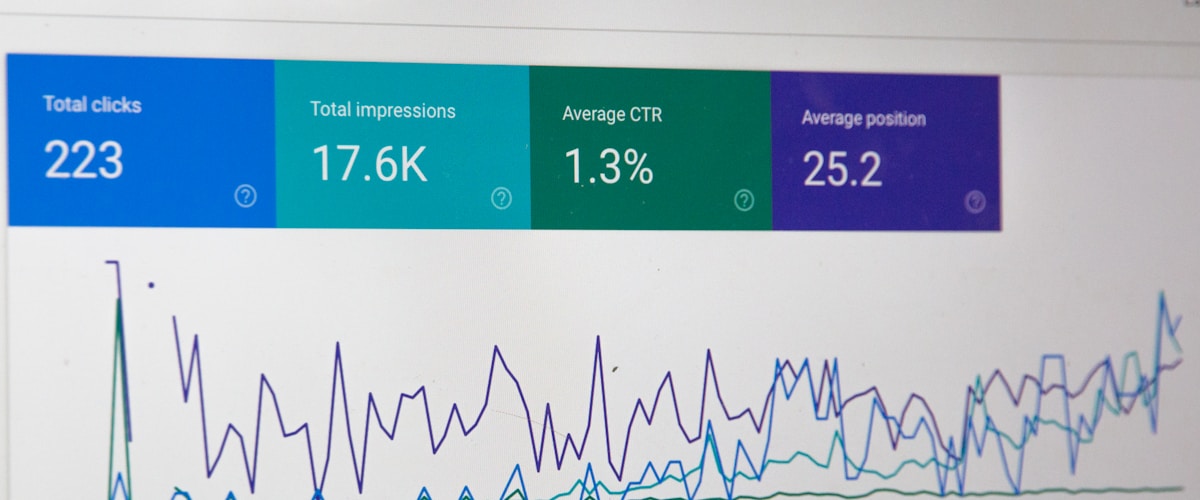

Cost Per API Call vs Human Labour

One of the most common questions in AI business cases is the unit economics: how does the cost of an AI API call compare to human labour for the same task?

The numbers in 2026 are striking. A typical document analysis task -- extracting key fields from an invoice, summarising a contract, classifying a support ticket -- costs roughly R0.05 to R0.50 per document when processed through an LLM API, depending on document length and model choice. The same task performed by a human employee costs R15-R50 per document when you factor in fully loaded labour costs, quality checking, and management overhead.

Across those ranges, the AI approach is at least 30x cheaper per unit (R15 against R0.50) and frequently 100x cheaper or more. However, the per-unit comparison is misleading if you do not account for the full system cost. The AI approach requires development, infrastructure, monitoring, and ongoing maintenance. These fixed and semi-variable costs must be amortised across the volume of items processed.

The break-even calculation is straightforward: divide the total annual cost of the AI system (development amortised over its useful life, plus annual operational costs) by the per-unit cost saving. The result is the annual volume at which the AI system pays for itself. For most commercial applications, this break-even point is reached at surprisingly low volumes -- often in the thousands of items per month, not millions.
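The break-even calculation described above can be sketched in a few lines. The function name, the three-year useful life, and the cost figures are assumptions for illustration. The sketch also assumes that per-item API fees are counted in `ai_cost_per_item` and not again in `annual_operating_cost`.

```python
def break_even_volume(
    development_cost: float,
    useful_life_years: float,
    annual_operating_cost: float,
    human_cost_per_item: float,
    ai_cost_per_item: float,
) -> float:
    """Annual item volume at which the AI system pays for itself."""
    saving_per_item = human_cost_per_item - ai_cost_per_item
    if saving_per_item <= 0:
        raise ValueError("AI must be cheaper per item for a break-even to exist")
    annual_system_cost = development_cost / useful_life_years + annual_operating_cost
    return annual_system_cost / saving_per_item


if __name__ == "__main__":
    # Hypothetical figures using the least favourable per-item costs quoted above.
    volume = break_even_volume(
        development_cost=1_200_000,
        useful_life_years=3,
        annual_operating_cost=350_000,
        human_cost_per_item=15.0,
        ai_cost_per_item=0.50,
    )
    print(f"Break-even: {volume:,.0f} items per year ({volume / 12:,.0f} per month)")
```

With these assumed inputs the break-even lands at a few thousand items per month, which matches the order of magnitude described above.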

AI processes documents at 30-100x lower cost per unit than manual labour.

Implementation Timelines

Setting realistic expectations for implementation timelines is critical. Here is what we typically see across client engagements at Pepla:

Phase 1: Proof of Concept (2-4 weeks)

Build a working prototype that demonstrates the AI approach on representative data. This is fast and relatively cheap. It answers the question: "Can AI handle this task at an acceptable quality level?"

Phase 2: Pilot (6-10 weeks)

Build a production-quality system handling a subset of real data in a controlled environment. Develop evaluation frameworks. Measure accuracy, speed, and cost against the current process. Identify edge cases. This phase answers: "Does the quality hold up on real data, and what does the production system actually require?"

Phase 3: Production Deployment (8-14 weeks)

Build the full production pipeline: input handling, error recovery, monitoring, integration with existing systems, human escalation paths, cost management. Roll out gradually, starting with low-risk items and expanding as confidence grows. This phase is where most of the engineering effort concentrates.

Phase 4: Optimisation (Ongoing)

Tune prompts, optimise costs through model tiering and caching, expand coverage to additional task types, and address edge cases that emerge from production usage. This phase never truly ends -- it transitions into ongoing operations.

Total timeline from decision to full production deployment: typically 4-7 months for a well-scoped project. The most common cause of delays is scope creep -- trying to automate too many variations in the first release rather than starting with the high-volume, well-defined cases and expanding from there.
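As a quick check, the phase durations above can be summed to see how they relate to the overall estimate. This sketch assumes the three build phases run strictly one after another with no gaps, which is a simplification; decision time and hand-offs between phases would add to the total.

```python
# Phase durations in weeks (low, high), taken from the phases described above.
PHASES_WEEKS = {
    "Proof of Concept": (2, 4),
    "Pilot": (6, 10),
    "Production Deployment": (8, 14),
}

WEEKS_PER_MONTH = 52 / 12


def total_weeks(phases: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low and high estimates across sequential phases."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


if __name__ == "__main__":
    low, high = total_weeks(PHASES_WEEKS)
    print(f"{low}-{high} weeks")
    print(f"{low / WEEKS_PER_MONTH:.1f}-{high / WEEKS_PER_MONTH:.1f} months")
```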

Risk Assessment

Every business case should include an honest assessment of risks. For AI automation, the significant risks include:

Quality Risk

AI output quality is probabilistic, not deterministic. Even a system with 95% accuracy will produce incorrect results 5% of the time. For high-stakes decisions -- financial transactions, medical assessments, legal compliance -- this error rate may be unacceptable without human oversight. Mitigation: design the system with human-in-the-loop checkpoints for high-risk decisions, and invest in monitoring to detect quality degradation early.
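One common way to implement the human-in-the-loop checkpoint described above is to route items by confidence and by stakes. The sketch below is an assumption-laden illustration: the `Prediction` fields, the 0.90 threshold, and the idea that the system exposes a usable confidence score are all hypothetical and would need validating against real data.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    item_id: str
    confidence: float  # assumed score in [0, 1]; calibration is not guaranteed
    high_stakes: bool  # e.g. financial, medical, or legal decisions


def route(p: Prediction, threshold: float = 0.90) -> str:
    """Send high-stakes or low-confidence items to a human reviewer."""
    if p.high_stakes or p.confidence < threshold:
        return "human_review"
    return "auto_approve"


def expected_errors(items: int, accuracy: float) -> float:
    """Expected number of incorrect results at a given accuracy."""
    return items * (1 - accuracy)


if __name__ == "__main__":
    print(route(Prediction("inv-001", confidence=0.97, high_stakes=False)))
    print(route(Prediction("loan-042", confidence=0.97, high_stakes=True)))
    print(f"Expected errors in 10,000 items at 95% accuracy: "
          f"{expected_errors(10_000, 0.95):.0f}")
```

The threshold is a business decision as much as a technical one: lowering it reduces review workload but lets more errors through unreviewed.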

Vendor Dependency

Most AI systems depend on third-party model APIs. Pricing changes, service interruptions, or changes in model behaviour when providers release updates can impact your system without any change on your side. Mitigation: abstract your AI integration behind an interface that supports multiple providers, and maintain the ability to switch models.
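The abstraction suggested above might look something like the following sketch. `ModelProvider`, `FallbackClient`, and `StubProvider` are hypothetical names invented for this example; a real integration would wrap each vendor's own SDK behind the same interface.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


class ModelProvider(Protocol):
    """Interface every provider wrapper is assumed to implement."""

    name: str

    def complete(self, prompt: str) -> str: ...


class FallbackClient:
    """Try providers in order, moving to the next one when a call fails."""

    def __init__(self, providers: Sequence[ModelProvider]) -> None:
        if not providers:
            raise ValueError("at least one provider is required")
        self._providers = list(providers)

    def complete(self, prompt: str) -> str:
        errors = []
        for provider in self._providers:
            try:
                return provider.complete(prompt)
            except Exception as exc:  # outage, rate limit, timeout, and so on
                errors.append(f"{provider.name}: {exc}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


@dataclass
class StubProvider:
    """Stand-in for a real vendor SDK wrapper, used only for demonstration."""

    name: str
    fail: bool = False

    def complete(self, prompt: str) -> str:
        if self.fail:
            raise ConnectionError("simulated outage")
        return f"{self.name}: {prompt}"


if __name__ == "__main__":
    client = FallbackClient([StubProvider("primary", fail=True), StubProvider("secondary")])
    print(client.complete("Classify this support ticket"))
```

Switching models is rarely as simple as swapping a class, because prompts and evaluation results tend to be model-specific. The interface keeps that work contained in one place.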

Regulatory Risk

AI regulation is evolving rapidly. Requirements that do not exist today may be mandated next year. Automated decisions affecting individuals may require explainability, consent, or human review under frameworks like POPIA, GDPR, or the EU AI Act. Mitigation: build with compliance in mind from the start. Document your AI decision-making processes and maintain the ability to provide human review for any automated decision.

Adoption Risk

The technology works, but the organisation does not adopt it. Employees bypass the system, revert to manual processes, or use it incorrectly. This is the most common cause of AI project failure and the one most often overlooked in business cases. Mitigation: invest in change management, involve end users in design, and demonstrate clear personal benefit (not just organisational efficiency) to the people whose workflows will change.

The greatest risk in AI automation is not that the technology fails. It is that the organisation fails to adopt it. Budget for change management or budget for failure.


Change Management

Successful AI automation requires deliberate change management. The principles are well-established but frequently ignored:

- Involve stakeholders early. The people whose work will be affected should participate in defining how the automation works. They understand the edge cases, the exceptions, and the reasons certain processes exist in their current form.

- Communicate transparently. Address the obvious concern: "Will this replace my job?" In most cases, the honest answer is that it changes the job rather than eliminating it. Be specific about what will change and what will not.

- Deploy gradually. Start with a subset of work, run AI and human processes in parallel, and build confidence before expanding. The first AI output error in a full-scale deployment creates distrust that takes months to rebuild.

- Measure and share results. Publish metrics that show the impact -- not just cost savings (which can feel threatening) but quality improvements, time saved for higher-value work, and capacity created for growth.

- Iterate based on feedback. The first deployment will not be perfect. Create clear channels for users to report issues and demonstrate that their feedback results in improvements.
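The gradual deployment principle above, running AI and human processes in parallel, can be backed by a simple agreement check before coverage expands. This is a minimal sketch; the 95% agreement threshold and the minimum sample of 200 items are assumptions, and the right values depend on the process and its risk.

```python
from typing import Hashable, Iterable


def agreement_rate(pairs: Iterable[tuple[Hashable, Hashable]]) -> float:
    """Share of items where the AI result matches the human result."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("no parallel-run results to compare")
    matches = sum(1 for human, ai in pairs if human == ai)
    return matches / len(pairs)


def ready_to_expand(
    pairs: Iterable[tuple[Hashable, Hashable]],
    min_agreement: float = 0.95,
    min_sample: int = 200,
) -> bool:
    """Expand only after enough items have been compared and agreement is high."""
    pairs = list(pairs)
    return len(pairs) >= min_sample and agreement_rate(pairs) >= min_agreement


if __name__ == "__main__":
    # Hypothetical parallel run: 192 matching classifications, 8 disagreements.
    results = [("approve", "approve")] * 192 + [("approve", "reject")] * 8
    print(f"Agreement: {agreement_rate(results):.0%}")
    print(f"Ready to expand: {ready_to_expand(results)}")
```

Disagreements are worth reviewing individually, since some will turn out to be human errors and not AI errors.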

Measuring Success

Define success metrics before you begin, not after deployment. A robust measurement framework includes:

- Efficiency metrics. Processing time per item, throughput (items per hour/day), and human time spent per item (including oversight and correction).

- Quality metrics. Accuracy rate, error rate by type, customer satisfaction scores for customer-facing automation, and compliance adherence rate.

- Financial metrics. Cost per item processed, total monthly operating cost, cost savings versus the previous process, and payback period tracking.

- Adoption metrics. Percentage of eligible items processed through the automated system, user satisfaction scores, and support ticket volume related to the system.

- Business impact metrics. Revenue enabled by increased capacity, customer satisfaction changes, time-to-market improvements, and competitive advantages gained.

Track these monthly and review them quarterly against your business case projections. The data will tell you whether to expand, optimise, or reconsider your approach.
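A monthly review against the business case can be reduced to a handful of derived metrics and a comparison with projections. The sketch below covers only three of the metrics listed above; the `MonthlyMetrics` fields, the 5% tolerance, and the example figures are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyMetrics:
    eligible_items: int    # items that could have gone through the system
    automated_items: int   # items actually processed by the system
    correct_items: int     # automated items verified correct (e.g. by sampling)
    operating_cost: float  # total monthly operating cost

    def __post_init__(self) -> None:
        if self.eligible_items <= 0 or self.automated_items <= 0:
            raise ValueError("item counts must be positive")

    @property
    def adoption_rate(self) -> float:
        return self.automated_items / self.eligible_items

    @property
    def accuracy(self) -> float:
        return self.correct_items / self.automated_items

    @property
    def cost_per_item(self) -> float:
        return self.operating_cost / self.automated_items


def shortfalls(
    actual: MonthlyMetrics, target: MonthlyMetrics, tolerance: float = 0.05
) -> list[str]:
    """Names of metrics that miss the projection by more than the tolerance."""
    flagged = []
    if actual.adoption_rate < target.adoption_rate * (1 - tolerance):
        flagged.append("adoption_rate")
    if actual.accuracy < target.accuracy * (1 - tolerance):
        flagged.append("accuracy")
    if actual.cost_per_item > target.cost_per_item * (1 + tolerance):
        flagged.append("cost_per_item")
    return flagged


if __name__ == "__main__":
    projected = MonthlyMetrics(10_000, 7_000, 6_650, 35_000.0)
    observed = MonthlyMetrics(10_000, 5_500, 5_280, 33_000.0)
    print("Behind projection on:", shortfalls(observed, projected))
```

In this hypothetical month, accuracy is on track but adoption is not, which pushes the cost per item above plan even though total spend is lower. That pattern points to a change management problem, not a technology problem.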

Build the business case on conservative estimates -- if it works at 60% automation, you have headroom.

Practical Recommendations

- Start with a high-volume, well-defined process where the current cost is known and measurable. Avoid ambiguous, judgment-heavy processes for your first AI project.

- Budget for the full lifecycle: development, deployment, operations, and change management. The development cost is typically less than half the total.

- Set realistic expectations. AI automation rarely delivers 100% automation. Plan for 60-80% automation with human handling of the remainder, and optimise from there.

- Build the business case on conservative estimates. If the numbers work at 60% automation and 85% accuracy, you have headroom. If they only work at 95% automation and 99% accuracy, the risk is too high.

- Engage an implementation partner with production AI experience. The gap between a proof of concept and a production system is where most projects fail, and the engineering required is specific to AI systems.
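The recommendation to build the business case on conservative estimates can be tested with a small sensitivity check: run the same model at a conservative and an optimistic scenario and see whether the conservative one still pays. Everything in the sketch below (the cost model, the rework cost per error, and the rand figures) is an assumption made for illustration.

```python
def net_annual_benefit(
    items_per_year: int,
    human_cost_per_item: float,
    ai_cost_per_item: float,
    automation_rate: float,
    accuracy: float,
    rework_cost_per_error: float,
    annual_fixed_cost: float,
) -> float:
    """Savings on automated items, minus rework on errors, minus fixed costs."""
    automated = items_per_year * automation_rate
    gross_saving = automated * (human_cost_per_item - ai_cost_per_item)
    rework = automated * (1 - accuracy) * rework_cost_per_error
    return gross_saving - rework - annual_fixed_cost


# Hypothetical inputs shared by both scenarios.
BASE = dict(
    items_per_year=100_000,
    human_cost_per_item=20.0,
    ai_cost_per_item=0.50,
    rework_cost_per_error=50.0,
    annual_fixed_cost=500_000.0,
)

if __name__ == "__main__":
    conservative = net_annual_benefit(automation_rate=0.60, accuracy=0.85, **BASE)
    optimistic = net_annual_benefit(automation_rate=0.95, accuracy=0.99, **BASE)
    print(f"Conservative (60% automation, 85% accuracy): R{conservative:,.0f}")
    print(f"Optimistic (95% automation, 99% accuracy): R{optimistic:,.0f}")
```

If the conservative scenario comes out negative for your own inputs, the case depends on the optimistic assumptions holding, which is the high-risk situation the recommendations warn against.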