Building a voice AI system that works in a demo is straightforward. Building one that handles thousands of real phone calls per day, with real humans who mumble, interrupt, speak in noisy environments, and have zero patience for robotic responses, is an entirely different challenge.

Pepla Voice is our production voice automation platform. It handles inbound and outbound calls for clients in financial services, healthcare, and customer support. This article shares the engineering lessons we learned the hard way: the architecture decisions that worked, the ones that did not, and the production realities that no tutorial prepares you for.

Speech-to-Text: The First 500 Milliseconds

Everything in a voice pipeline starts with converting speech to text. The quality and speed of your speech-to-text (STT) component determine the ceiling for your entire system. If the transcription is wrong, nothing downstream can fix it.


We evaluated every major STT provider during development. The key dimensions are accuracy, latency, language support, and cost. For South African English and Afrikaans, which are critical for our market, the landscape was initially challenging. Most models were trained primarily on American and British English.

Our STT Journey

We started with Whisper, which offered excellent accuracy but unacceptable latency for real-time conversation. Even with the large-v3 model running on an A100 GPU, a typical utterance took 0.8 to 1.2 seconds to process. In a phone conversation, that delay is immediately noticeable and feels unnatural.

We moved to Deepgram's Nova-2 model for production, which offered streaming transcription with sub-200ms latency. Its accuracy was slightly lower than Whisper's on our test set, but the latency improvement transformed the user experience. In 2026, we also added AssemblyAI's Universal-2 model as an option; it has closed the accuracy gap while maintaining competitive streaming latency.

The single most important metric in voice AI is time-to-first-response. Every 100ms of additional latency measurably reduces user satisfaction and increases hang-up rates. We target under 800ms from end-of-user-speech to start-of-AI-speech.
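One way to make that target enforceable is to split it into per-stage budgets and check every turn against them. The sketch below is illustrative only: the 800ms total comes from the target above, while the stage names and the per-stage split are assumptions chosen so that they add up to 800ms.

```python
# Hypothetical per-stage budgets in milliseconds. Only the 800 ms total
# is taken from the article; the split between stages is an assumption.
STAGE_BUDGET_MS = {
    "stt_final": 200,        # end of caller speech -> final transcript
    "llm_first_token": 350,  # transcript -> first LLM token
    "tts_first_audio": 250,  # first token -> first audio sent to caller
}
TOTAL_BUDGET_MS = 800


def check_turn_latency(measured_ms: dict[str, float]) -> list[str]:
    """Return human-readable budget violations for one conversational turn."""
    violations = []
    for stage, budget in STAGE_BUDGET_MS.items():
        actual = measured_ms.get(stage, 0.0)
        if actual > budget:
            violations.append(f"{stage}: {actual:.0f}ms > {budget}ms")
    total = sum(measured_ms.get(stage, 0.0) for stage in STAGE_BUDGET_MS)
    if total > TOTAL_BUDGET_MS:
        violations.append(f"total: {total:.0f}ms > {TOTAL_BUDGET_MS}ms")
    return violations
```

A turn can stay inside the total while one stage overruns, or the reverse, so the check reports both kinds of violation separately.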

LLM Latency Management

Once you have the transcript, the LLM needs to generate a response. In a text-based chatbot, users accept a second or two of thinking time. In a voice conversation, silence is death. More than 1.5 seconds of silence and callers start saying "Hello? Are you there?"

Streaming Is Non-Negotiable

The LLM must stream its response token by token. As soon as the first few tokens arrive, you start text-to-speech synthesis. This means the caller hears the beginning of the response while the LLM is still generating the rest. Effective streaming can cut perceived latency by 60-70%.
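The handoff from token stream to TTS can be sketched as a small buffer that emits text as soon as a sentence boundary appears. This is a minimal illustration, not our production code: the function name is hypothetical, and the boundary rule (punctuation followed by whitespace) is a simplifying assumption that will split incorrectly on abbreviations such as "Dr. Smith".

```python
import re
from typing import Iterable, Iterator

# A sentence boundary is whitespace that follows ., ! or ?
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as the token stream contains them."""
    buffer = ""
    for token in tokens:
        buffer += token
        parts = _SENTENCE_END.split(buffer)
        # Everything except the last part is a finished sentence.
        for sentence in parts[:-1]:
            if sentence:
                yield sentence
        buffer = parts[-1]
    # Flush whatever remains once the LLM stops generating.
    if buffer.strip():
        yield buffer.strip()
```

Each yielded sentence can be sent to TTS immediately, so synthesis of the first sentence overlaps with generation of the second.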

Model Selection for Voice

We use different models for different complexity levels. Simple intent classification and FAQ responses use a smaller, faster model. Complex reasoning, multi-step workflows, and escalation decisions use a more capable model. This tiered approach keeps average latency low while preserving quality for hard cases.
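A tiered router of this kind can be very small. The sketch below is a hedged example: the model names, the intent labels, the confidence threshold, and the `Turn` fields are all placeholders invented for illustration.

```python
from dataclasses import dataclass

# Placeholder model identifiers, not real model names.
FAST_MODEL = "small-fast-model"
CAPABLE_MODEL = "large-capable-model"

# Hypothetical intents considered safe for the fast tier.
SIMPLE_INTENTS = {"greeting", "faq", "opening_hours", "balance_enquiry"}


@dataclass
class Turn:
    intent: str                    # label from an upstream intent classifier
    intent_confidence: float       # 0.0 to 1.0
    workflow_steps_remaining: int  # >1 means a multi-step workflow


def choose_model(turn: Turn, min_confidence: float = 0.8) -> str:
    """Route easy turns to the fast model and everything else to the capable one."""
    if turn.intent not in SIMPLE_INTENTS:
        return CAPABLE_MODEL
    if turn.intent_confidence < min_confidence:
        return CAPABLE_MODEL
    if turn.workflow_steps_remaining > 1:
        return CAPABLE_MODEL
    return FAST_MODEL
```

Defaulting to the capable model whenever a condition is uncertain trades a little latency for fewer wrong answers on hard cases.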

The prompt engineering for voice is also different from text. Responses must be concise, conversational, and structured for spoken delivery. Long paragraphs that work in a chatbot are terrible when read aloud. We instruct the model to use short sentences, avoid jargon, and never produce markdown or formatting characters.
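Prompt instructions are not a guarantee, so a defensive clean-up pass before TTS is a reasonable safety net. The following is a sketch under that assumption; the function name and the exact set of characters removed are illustrative choices, not a description of our pipeline.

```python
import re


def clean_for_speech(text: str) -> str:
    """Strip common markdown so that TTS does not read formatting aloud."""
    # Replace links of the form [label](url) with just the label.
    text = re.sub(r"\[([^\]]+)\]\([^)]+\)", r"\1", text)
    # Drop heading markers and list markers at the start of a line.
    text = re.sub(r"^\s*(?:#{1,6}|[-*+]|\d+\.)\s+", "", text, flags=re.MULTILINE)
    # Remove emphasis, inline-code, and strikethrough characters.
    text = re.sub(r"[*_`~]", "", text)
    # Collapse newlines and repeated spaces into single spaces.
    text = re.sub(r"\s+", " ", text)
    return text.strip()
```

For example, a reply containing bold text and a bulleted list comes out as one plain spoken-style sentence sequence.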

The first 500ms of speech-to-text latency determines whether a voice AI feels natural or robotic.

Text-to-Speech: Making It Sound Human

Text-to-speech (TTS) has improved dramatically. The gap between synthetic and human speech has narrowed to the point where many callers cannot tell the difference in short interactions. But "good enough" in a demo and "good enough" for a 10-minute customer service call are very different bars.

Natural Prosody and Emotion

Modern TTS engines from ElevenLabs, PlayHT, and OpenAI support emotional control and natural prosody. The AI voice needs to sound empathetic when a customer is frustrated, professional when discussing financial matters, and warm when greeting a caller. We control this through a combination of prompt instructions (which influence the text the LLM generates) and TTS-specific style parameters.

Pronunciation and Domain Vocabulary

Every domain has words that TTS engines mispronounce. Medical terms, South African place names, Afrikaans surnames, product codes, and abbreviations all need custom pronunciation dictionaries. We maintain per-client pronunciation lexicons that map problem words to their phonetic representations.

This seems like a minor detail until a caller hears the AI mangle their name or their medication. Trust evaporates instantly.
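A per-client lexicon can be applied as a text substitution just before synthesis. The sketch below assumes a plain dictionary of written form to respelling; the entries in the usage note are invented examples, and real TTS engines may expect phoneme markup rather than respellings.

```python
import re
from typing import Callable


def build_lexicon_replacer(lexicon: dict[str, str]) -> Callable[[str], str]:
    """Return a function that swaps problem words for their respellings."""
    if not lexicon:
        return lambda text: text
    # Longest entries first so that phrases win over their substrings.
    keys = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(
        r"(?<!\w)(" + "|".join(re.escape(k) for k in keys) + r")(?!\w)",
        flags=re.IGNORECASE,
    )
    lookup = {k.lower(): v for k, v in lexicon.items()}

    def apply(text: str) -> str:
        return pattern.sub(lambda m: lookup[m.group(1).lower()], text)

    return apply
```

With a hypothetical lexicon such as `{"ACC-204": "A C C two oh four"}`, calling the returned function on "Ref ACC-204." produces "Ref A C C two oh four." while leaving all other words untouched.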

Telephony Integration: SIP and WebRTC

Voice AI does not exist in isolation. It needs to integrate with existing telephony infrastructure, which means SIP trunks, PBX systems, IVR flows, and call recording.

SIP for Traditional Telephony

Most enterprise call centres run on SIP-based infrastructure. Our platform connects via SIP trunks to providers like Twilio, Vonage, and local South African telcos. SIP integration brings its own challenges: codec negotiation, NAT traversal, DTMF handling, and the occasional provider that does not quite follow the RFC.

WebRTC for Modern Channels

For web-based and app-based voice interactions, we use WebRTC. This provides lower latency than SIP (no PSTN hop), better audio quality (Opus codec), and native browser support. The tradeoff is that it requires the caller to have a data connection, which makes it unsuitable for traditional phone calls.

In practice, most of our deployments use SIP for inbound call centre traffic and WebRTC for web widget and mobile app integrations.


Handling Interruptions

Humans interrupt each other constantly during conversation. It is a natural part of communication. If your voice AI cannot handle interruptions gracefully, callers will find it infuriating.

We implement barge-in detection using voice activity detection (VAD) on the caller's audio stream. When the caller starts speaking while the AI is talking, we:

- Immediately stop TTS playback

- Cancel any in-progress LLM generation

- Wait for the caller to finish their utterance

- Process the new input with context from the interrupted response

The tricky part is distinguishing between a genuine interruption and background noise, a cough, or an "uh-huh" acknowledgment. Aggressive barge-in detection causes the AI to stop mid-sentence every time there is ambient noise. Conservative detection makes the AI seem oblivious to the caller trying to redirect the conversation.

We settled on a two-stage approach: a fast VAD triggers initial audio capture, and a lightweight classifier determines whether the captured audio is a genuine interruption or noise. This reduced false barge-ins by 78% compared to VAD alone.
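The shape of the two-stage check can be sketched as follows. Stage one is a simple energy-based VAD; stage two is a rule-based stand-in for the classifier, used here only so the example runs without a trained model. All thresholds, the backchannel list, and the `Candidate` fields are assumptions.

```python
from dataclasses import dataclass

# Short acknowledgments that should not stop the AI mid-sentence (illustrative list).
BACKCHANNELS = {"uh-huh", "mm-hmm", "ok", "okay", "yeah", "right"}


@dataclass
class Candidate:
    voiced_ms: int           # speech-like audio captured after the VAD fired
    partial_transcript: str  # streaming STT partial for that audio


def vad_triggered(frame_energies: list[float], threshold: float = 0.02,
                  min_frames: int = 3) -> bool:
    """Stage 1: fire once min_frames consecutive frames exceed the threshold."""
    run = 0
    for energy in frame_energies:
        run = run + 1 if energy > threshold else 0
        if run >= min_frames:
            return True
    return False


def is_genuine_interruption(c: Candidate, min_voiced_ms: int = 300) -> bool:
    """Stage 2 stand-in: reject short bursts, empty transcripts, and backchannels."""
    words = [w.strip(".,!?") for w in c.partial_transcript.lower().split()]
    words = [w for w in words if w]
    if c.voiced_ms < min_voiced_ms:
        return False  # likely a cough, click, or door slam
    if not words:
        return False  # sound with no recognised speech
    if len(words) <= 2 and all(w in BACKCHANNELS for w in words):
        return False  # acknowledgment, not a redirect
    return True


def should_barge_in(frame_energies: list[float], candidate: Candidate) -> bool:
    """Stop TTS only when both stages agree."""
    return vad_triggered(frame_energies) and is_genuine_interruption(candidate)
```

Keeping stage one cheap means it can run on every audio frame, while the more expensive second stage only runs on the short clips that stage one flags.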

Fallback to Human Agents

No voice AI system should operate without the ability to hand off to a human agent. The question is not whether you need escalation, but when and how to trigger it.

Our escalation triggers include:

- Explicit request: The caller says "Let me speak to a person." This must always be honoured immediately.

- Sentiment deterioration: Real-time sentiment analysis detects rising frustration. If the sentiment score drops below a threshold for two consecutive turns, we offer a transfer.

- Confidence thresholds: When the AI's confidence in its understanding or response drops below acceptable levels, it is better to escalate than to guess.

- Loop detection: If the conversation circles back to the same topic three times without resolution, the issue is beyond the AI's capability.

- Regulatory requirements: Certain actions (identity verification, financial authorisations, medical advice) require human involvement by regulation.
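The triggers above can be combined into a single decision function evaluated after every caller turn. This is a sketch under stated assumptions: the field names, phrase list, action labels, and threshold values are hypothetical, and the check order simply puts the non-negotiable triggers first. For the sentiment case the caller would be offered a transfer rather than moved automatically.

```python
from dataclasses import dataclass, field
from typing import Optional

# Illustrative phrases and action labels; a real system would use an intent model.
HUMAN_REQUEST_PHRASES = ("speak to a person", "speak to a human", "talk to an agent")
REGULATED_ACTIONS = {"identity_verification", "financial_authorisation", "medical_advice"}


@dataclass
class CallState:
    last_utterance: str = ""
    sentiment_scores: list[float] = field(default_factory=list)  # one per caller turn
    ai_confidence: float = 1.0
    topic_history: list[str] = field(default_factory=list)       # one topic per turn
    pending_action: Optional[str] = None


def escalation_reason(state: CallState, sentiment_floor: float = -0.4,
                      confidence_floor: float = 0.6,
                      max_topic_repeats: int = 3) -> Optional[str]:
    """Return the first trigger that applies, or None to keep the AI on the call."""
    text = state.last_utterance.lower()
    if any(phrase in text for phrase in HUMAN_REQUEST_PHRASES):
        return "explicit_request"
    if state.pending_action in REGULATED_ACTIONS:
        return "regulatory"
    recent = state.sentiment_scores[-2:]
    if len(recent) == 2 and all(score < sentiment_floor for score in recent):
        return "sentiment"
    if state.ai_confidence < confidence_floor:
        return "low_confidence"
    topics = state.topic_history
    if topics and topics.count(topics[-1]) >= max_topic_repeats:
        return "loop"
    return None
```

Returning a reason rather than a boolean lets the reason travel with the call, so the receiving agent knows why the transfer happened.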

The handoff itself must be seamless. The human agent should receive the full conversation transcript, the AI's assessment of the caller's issue, and any data collected during the call. Nobody should have to repeat themselves.
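The context passed to the agent can be modelled as one serialisable record. The field names below are assumptions made for illustration; what matters is that the transcript, the AI's summary, and the collected data travel together.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffContext:
    call_id: str
    escalation_reason: str
    issue_summary: str            # the AI's assessment of the caller's issue
    transcript: list[dict]        # e.g. [{"speaker": "caller", "text": "..."}]
    collected_data: dict = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialise the record for the agent desktop or CRM."""
        return json.dumps(asdict(self), ensure_ascii=False)
```

Sending a single record avoids the agent having to piece the story together from separate systems while the caller waits.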

Monitoring Voice Quality in Production

Voice systems fail in ways that text systems do not. Audio quality degrades, latency spikes, and transcription accuracy drops, all without producing a traditional error log.

Metrics We Track

- Time-to-first-byte (TTFB): Measured at each stage of the pipeline (STT, LLM, TTS). Alerts fire if any stage exceeds its latency budget.

- Word error rate (WER): Sampled transcriptions are compared against human transcription. We maintain a target WER below 8% across all accents we support.

- Task completion rate: What percentage of calls achieve their intended outcome without escalation?

- Caller satisfaction: Post-call surveys and sentiment analysis provide direct quality feedback.

- Hang-up rate: Callers hanging up mid-conversation is the strongest signal that something is wrong.
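Of these metrics, word error rate has a standard definition: the word-level edit distance (substitutions, deletions, and insertions) between the human reference and the STT output, divided by the number of reference words. A minimal sketch follows; it only lowercases and splits on whitespace, so punctuation and number normalisation are left out as a simplifying assumption.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)
```

One dropped word in a seven-word reference gives a WER of 1/7, roughly 14%, which shows how quickly short utterances can exceed an 8% target.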

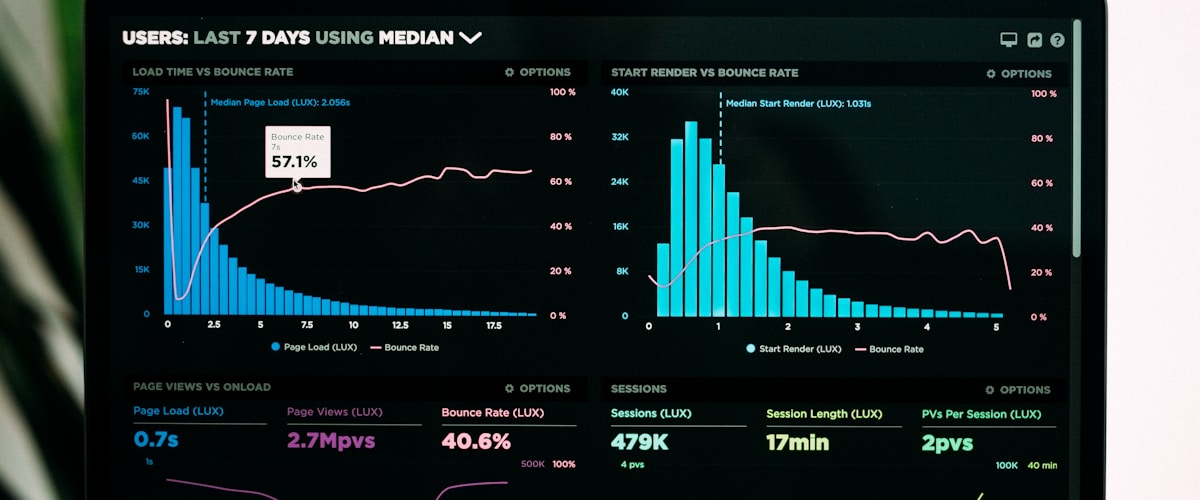

The Dashboard That Saved Us

We built a real-time monitoring dashboard that shows every active call with its current latency metrics, sentiment score, and conversation flow. When we first launched, this dashboard was how we discovered that our TTS provider had a regional outage that affected 30% of calls. Without real-time visibility, we would not have caught it for hours.


Lessons Learned

After eighteen months of running Pepla Voice in production, here are the lessons that shaped our architecture:

- Latency is the product. Users will tolerate minor inaccuracies but will not tolerate delays. Optimise for speed first, accuracy second.

- Test with real phone audio, not studio recordings. Background noise, speakerphone echo, Bluetooth artifacts, and poor mobile signal all degrade audio quality in ways that clean test data does not reveal.

- South African accents are underserved. Every STT model needs additional evaluation and often fine-tuning for local accents and languages.

- The first five seconds determine the entire call. If the greeting is natural and responsive, callers give the AI much more leeway for the rest of the conversation.

- Build for graceful degradation. When any component fails, the system should fall back, not crash. If TTS is slow, use pre-recorded filler phrases. If the LLM is down, route to a human immediately.
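The TTS fallback in the last point can be sketched with a timeout around the synthesis call. This is an illustration only: `synthesize` stands for any async function that returns audio bytes, and the filler bytes and the 400ms timeout are placeholders.

```python
import asyncio
from typing import Awaitable, Callable

# Placeholder for a pre-recorded clip such as "One moment, please."
FILLER_AUDIO = b"<pre-recorded filler phrase>"


async def synthesize_or_filler(
    synthesize: Callable[[str], Awaitable[bytes]],
    text: str,
    timeout_s: float = 0.4,
) -> tuple[bytes, bool]:
    """Return (audio, used_filler); fall back when TTS is slow or unreachable."""
    try:
        audio = await asyncio.wait_for(synthesize(text), timeout=timeout_s)
        return audio, False
    except (asyncio.TimeoutError, ConnectionError):
        return FILLER_AUDIO, True
```

Note that `asyncio.wait_for` cancels the slow request on timeout. A fuller design might instead let synthesis continue in the background and play the filler only to cover the gap.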

Voice AI in 2026 is at an inflection point. The technology is finally good enough for production use across most customer service scenarios. But getting from "good enough" to "genuinely excellent" requires careful engineering at every layer of the stack, relentless focus on latency, and a monitoring infrastructure that catches problems before your callers do.